Apple support iCloud storage plans series

Just as crucially to prevent evasion, its system never actually downloads those NCMEC hashes to a user's device. Instead, it uses some cryptographic tricks to convert them into a so-called blind database that's downloaded to the user's phone or PC, containing seemingly meaningless strings of characters derived from those hashes. That blinding prevents any user from obtaining the hashes and using them to skirt the system's detection.

The system then compares that blind database of hashes with the hashed images on the user's device. The results of those comparisons are uploaded to Apple's server in what the company calls a "safety voucher" that's encrypted in two layers. The first layer of encryption is designed to use a cryptographic technique known as private set intersection, such that it can be decrypted only if the hash comparison produces a match. No information is revealed about hashes that don't match. The second layer of encryption is designed so that the matches can be decrypted only if there are a certain number of matches. Apple says this is designed to avoid false positives and ensure that it's detecting entire collections of CSAM, not single images. The company declined to name its threshold for the number of CSAM images it's looking for; in fact, it will likely adjust that threshold over time to tune its system and to keep its false positives to fewer than one in a trillion.

Those safeguards, Apple argues, will prevent any possible surveillance abuse of its iCloud CSAM detection mechanism, allowing it to identify collections of child exploitation images without ever seeing any other images that users upload to iCloud.

That immensely technical process represents a strange series of hoops to jump through when Apple doesn't currently end-to-end encrypt iCloud photos, and could simply perform its CSAM checks on the images hosted on its servers, as many other cloud storage providers do.
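The threshold property described here, where matches become readable only once enough of them have accumulated, can be illustrated with threshold secret sharing. The article does not say how Apple implements its second layer, so what follows is only a minimal sketch assuming Shamir's scheme over a prime field. The names `make_shares` and `recover`, the threshold of 3, and the toy key are all hypothetical.

```python
import secrets

# Assumption: a prime field large enough for a toy key (2**127 - 1 is a Mersenne prime).
PRIME = 2**127 - 1

def make_shares(secret, threshold, count):
    """Split `secret` into `count` shares; any `threshold` of them recover it."""
    # Random polynomial of degree threshold-1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME - 1) + 1 for _ in range(threshold - 1)]

    def evaluate(x):
        acc = 0
        for c in reversed(coeffs):  # Horner's rule, modulo PRIME
            acc = (acc * x + c) % PRIME
        return acc

    return [(x, evaluate(x)) for x in range(1, count + 1)]

def recover(shares):
    """Lagrange-interpolate the polynomial at x = 0 from the given shares."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret

# Hypothetical setup: one share is released per matching image.
key = 123456789
shares = make_shares(key, threshold=3, count=5)

enough = recover(shares[:3])      # three matches: the key comes back
too_few = recover(shares[:2])     # two matches: interpolation yields a different value
```

With fewer shares than the threshold, interpolation produces a value unrelated to the key, which is the sense in which a server holding only a few vouchers learns nothing from the second layer in this toy model.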
Then, like older systems of CSAM detection such as PhotoDNA, it compares them with a vast collection of known CSAM image hashes provided by NCMEC to find any matches. Apple is also using a new form of hashing it calls NeuralHash, which the company says can match images despite alterations like cropping or colorization.
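To make the idea of a hash that survives small alterations concrete, here is a toy sketch. It is not NeuralHash or PhotoDNA, whose internals the article does not describe. It assumes a simple "average hash" over a tiny grayscale pixel grid, and the helper names and the `max_distance` tolerance are hypothetical.

```python
from typing import List

Image = List[List[int]]  # toy stand-in for an image: grayscale pixels, 0-255

def average_hash(img: Image) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the image's mean."""
    pixels = [p for row in img for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming(a: int, b: int) -> int:
    """Number of bit positions where two hashes differ."""
    return bin(a ^ b).count("1")

def matches_known(h: int, known: List[int], max_distance: int = 2) -> bool:
    """True if `h` is within `max_distance` bits of any known hash (assumed tolerance)."""
    return any(hamming(h, k) <= max_distance for k in known)

original = [
    [10, 200, 30, 220],
    [15, 210, 25, 230],
    [12, 205, 35, 225],
    [18, 215, 28, 235],
]
# An altered copy: every pixel brightened by 20.
brightened = [[min(255, p + 20) for p in row] for row in original]
# A different image with the light and dark columns swapped.
unrelated = [
    [200, 10, 220, 30],
    [210, 15, 230, 25],
    [205, 12, 225, 35],
    [215, 18, 235, 28],
]

known_hashes = [average_hash(original)]
altered_matches = matches_known(average_hash(brightened), known_hashes)
unrelated_matches = matches_known(average_hash(unrelated), known_hashes)
```

Because the hash depends on each pixel's brightness relative to the mean rather than on exact byte values, uniformly brightening the image leaves the hash unchanged, while a byte-level hash of the altered file would differ completely.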

That feature will use a cryptographic process that takes place partly on the device and partly on Apple's servers to detect those images and report them to the National Center for Missing and Exploited Children, or NCMEC, and ultimately US law enforcement.

“The pressure is going to come from the UK, from the US, from India, from China. I'm terrified about what that's going to look like.”

Apple's new system isn't a straightforward scan of user images, either on the company's devices or on its iCloud servers. Instead, it's a clever and complex new form of image analysis designed to prevent Apple from ever seeing those photos unless they're already determined to be part of a collection of multiple CSAM images uploaded by a user. The system takes a "hash" of all images a user sends to iCloud, converting the files into strings of characters that are uniquely derived from those images.

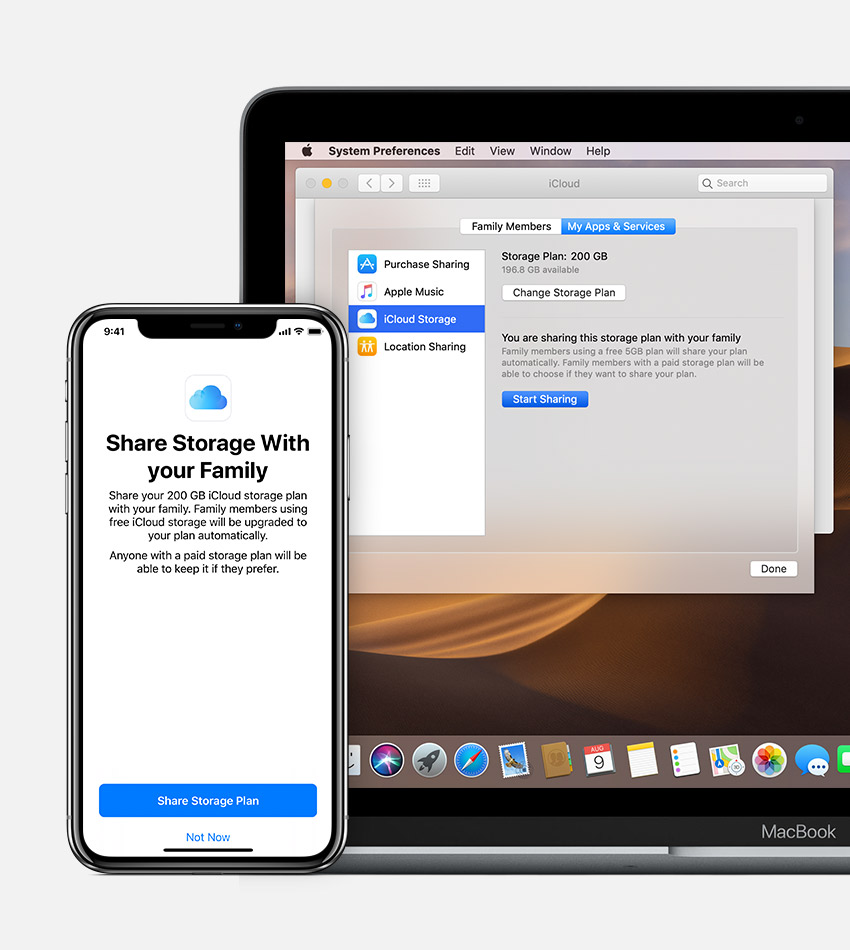

In doing so, it's also driven a wedge between privacy and cryptography experts who see its work as an innovative new solution and those who see it as a dangerous capitulation to government surveillance.

Today Apple introduced a new set of technological measures in iMessage, iCloud, Siri, and search, all of which the company says are designed to prevent the abuse of children. A new opt-in setting in family iCloud accounts will use machine learning to detect nudity in images sent in iMessage. The system can also block those images from being sent or received, display warnings, and in some cases alert parents that a child viewed or sent them. Siri and search will now display a warning if they detect that someone is searching for or seeing child sexual abuse materials, also known as CSAM, and offer options to seek help for their behavior or to report what they found.

But in Apple's most technically innovative, and controversial, new feature, iPhones, iPads, and Macs will now also integrate a new system that checks images uploaded to iCloud in the US for known child sexual abuse images.

For years, tech companies have struggled between two impulses: the need to encrypt users' data to protect their privacy and the need to detect the worst sorts of abuse on their platforms. Now Apple is debuting a new cryptographic system that seeks to thread that needle, detecting child abuse imagery stored on iCloud without, in theory, introducing new forms of privacy invasion.